AVSpeechSynthesizer

Though we’re a long way off from Hal or Her, we shouldn’t forget about the billions of people out there for us to talk to.

Of the thousands of languages in existence, an individual is fortunate to gain a command of just a few within their lifetime. And yet, over several millennia of human co-existence, civilization has managed to make things work (more or less) through an ad-hoc network of interpreters, translators, scholars, and children raised in the mixed linguistic traditions of their parents. We’ve seen that mutual understanding fosters peace and that conversely, mutual unintelligibility destabilizes human relations.

It’s fitting that the development of computational linguistics should coincide with the emergence of the international community we have today. Working towards mutual understanding, intergovernmental organizations like the United Nations and European Union have produced a substantial corpus of parallel texts, which form the foundation of modern language translation technologies.

Computer-assisted communication between speakers of different languages consists of three tasks: transcribing the spoken words into text, translating the text into the target language, and synthesizing speech for the translated text.

This article focuses on how iOS handles the last of these: speech synthesis.

Introduced in iOS 7 and available in macOS 10.14 Mojave, AVSpeechSynthesizer produces speech from text. To use it, create an AVSpeechUtterance object with the text to be spoken and pass it to the speak(_:) method:

import AVFoundation

let string = "Hello, World!"

let utterance = AVSpeechUtterance(string: string)

let synthesizer = AVSpeechSynthesizer()

synthesizer.speak(utterance)

You can adjust the volume, pitch, and rate of speech by configuring the corresponding properties on the AVSpeechUtterance object.
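As a minimal sketch of those properties, the snippet below slows the speech down a little, raises the pitch, and lowers the volume. The specific values are arbitrary choices for illustration, not recommendations.

```swift
import AVFoundation

let utterance = AVSpeechUtterance(string: "Hello, World!")

// rate must lie between AVSpeechUtteranceMinimumSpeechRate and
// AVSpeechUtteranceMaximumSpeechRate; here we go a bit slower than default.
utterance.rate = AVSpeechUtteranceDefaultSpeechRate * 0.8

// pitchMultiplier accepts values from 0.5 to 2.0 (default 1.0).
utterance.pitchMultiplier = 1.2

// volume accepts values from 0.0 (silent) to 1.0 (loudest, the default).
utterance.volume = 0.75

// Keep the synthesizer alive for as long as speech should continue.
let synthesizer = AVSpeechSynthesizer()
synthesizer.speak(utterance)
```

These settings belong to the utterance rather than the synthesizer, so each queued utterance can carry its own values.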

When speaking, a synthesizer can be paused on the next word boundary, which makes for a less jarring user experience than stopping mid-vowel.

synthesizer.pauseSpeaking(at: .word)

Supported Languages

Mac OS 9 users will no doubt have fond memories of the old system voices — especially the novelty ones, like Bubbles, Cellos, Pipe Organ, and Bad News.

In the spirit of quality over quantity, each language is provided a voice for each major locale region. So instead of asking for “Fred” or “Markus”, AVSpeechSynthesisVoice asks for en-US or de-DE.

VoiceOver supports over 30 different languages.

For an up-to-date list of what’s available, call the AVSpeechSynthesisVoice class method speechVoices() or check this support article.
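For instance, a short sketch like the following prints the distinct language tags of the voices installed on the current device. The output depends on the device and OS version, so none is shown here.

```swift
import AVFoundation

// Collect the language tag of every installed voice, dropping duplicates
// (several voices can share the same language).
let languages = Set(AVSpeechSynthesisVoice.speechVoices().map { $0.language })

for language in languages.sorted() {
    print(language)
}
```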

By default, AVSpeechSynthesizer will speak using a voice based on the user’s current language preferences. To avoid sounding like a stereotypical American in Paris, set an explicit language by selecting an AVSpeechSynthesisVoice.

let string = "Bonjour!"

let utterance = AVSpeechUtterance(string: string)

utterance.voice = AVSpeechSynthesisVoice(language: "fr-FR")

Many APIs in Foundation and other system frameworks use ISO 639 codes to identify languages. AVSpeechSynthesisVoice, however, takes an IETF Language Tag, as specified in the BCP 47 Document Series.

If an utterance string and voice aren’t in the same language, speech synthesis fails.

Not all languages are preloaded on the device; some may have to be downloaded in the background before speech can be synthesized.
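Because AVSpeechSynthesisVoice(language:) is a failable initializer, it can help to handle the case where no voice exists for a given tag. The sketch below wraps that check in makeUtterance(_:languageTag:), a hypothetical helper that is not part of AVFoundation.

```swift
import AVFoundation

// Hypothetical helper (not an AVFoundation API): builds an utterance and
// assigns a voice for the given BCP 47 tag when one is available.
func makeUtterance(_ text: String, languageTag: String) -> AVSpeechUtterance {
    let utterance = AVSpeechUtterance(string: text)

    // The initializer returns nil when the system has no voice for the tag.
    // Leaving `voice` as nil lets the synthesizer pick its default voice.
    if let voice = AVSpeechSynthesisVoice(language: languageTag) {
        utterance.voice = voice
    }
    return utterance
}

let utterance = makeUtterance("Guten Tag!", languageTag: "de-DE")
print(utterance.voice?.language ?? "default voice")

// The language tag derived from the user's current preferences.
print(AVSpeechSynthesisVoice.currentLanguageCode())
```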

Customizing Pronunciation

A few years after it first debuted on iOS, AVSpeechUtterance added functionality to control the pronunciation of particular words, which is especially helpful for proper names.

To take advantage of it, construct an utterance using init(attributedString:) instead of init(string:). The initializer scans through the attributed string for any values associated with the AVSpeechSynthesisIPANotationAttribute, and adjusts pronunciation accordingly.

import AVFoundation

let text = "It's pronounced 'tomato'"

let mutableAttributedString = NSMutableAttributedString(string: text)

Beautiful. 🍅

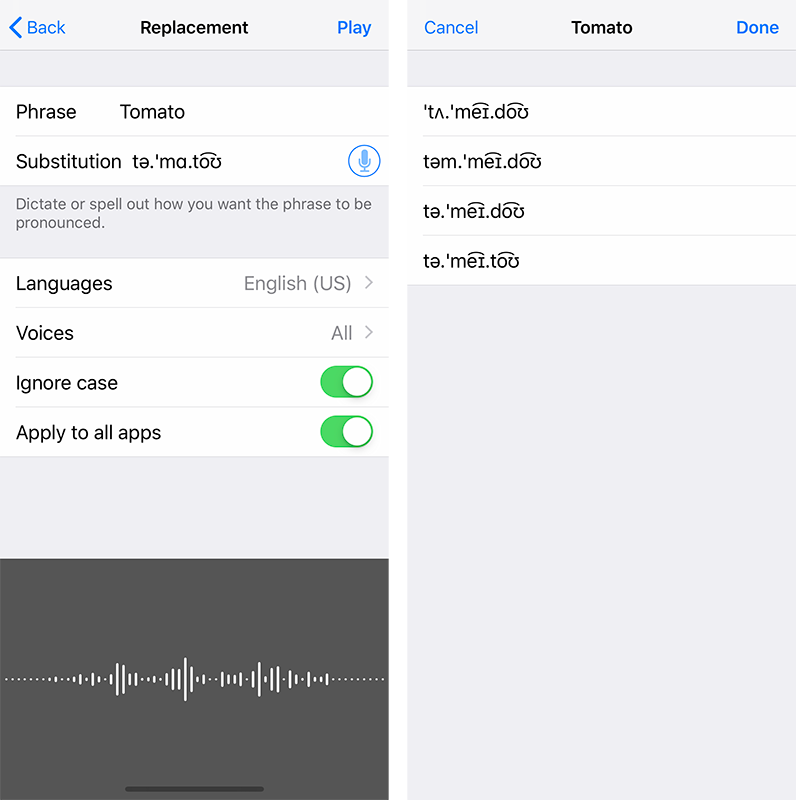

Of course, this property is undocumented at the time of writing, so you wouldn’t know that the IPA you get from Wikipedia won’t work correctly unless you watched this session from WWDC 2018.

To get IPA notation that AVSpeechUtterance can understand, you can open the Settings app, navigate to Accessibility > VoiceOver > Speech > Pronunciations, and… say it yourself!
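Putting the pieces together, a sketch of the attributed-string approach might look like the following. The IPA string used here is an assumption for illustration only; substitute whatever notation the Pronunciations screen produces for your word.

```swift
import AVFoundation

let text = "It's pronounced 'tomato'"
let attributedText = NSMutableAttributedString(string: text)

// Locate the word whose pronunciation should be overridden.
let range = (text as NSString).range(of: "tomato")

// The attribute name is exported as a plain string constant,
// so wrap it in an NSAttributedString.Key.
let ipaKey = NSAttributedString.Key(rawValue: AVSpeechSynthesisIPANotationAttribute)

// Assumed IPA transcription, for illustration only.
attributedText.addAttribute(ipaKey, value: "tə.ˈme͡ɪ.do͡ʊ", range: range)

let utterance = AVSpeechUtterance(attributedString: attributedText)

let synthesizer = AVSpeechSynthesizer()
synthesizer.speak(utterance)
```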

Hooking Into Speech Events

One of the coolest features of AVSpeechSynthesizer is how it lets developers hook into speech events. An object conforming to AVSpeechSynthesizerDelegate can be called when a speech synthesizer starts or finishes, pauses or continues, and as each range of the utterance is spoken.

For example, an app — in addition to synthesizing a voice utterance — could show that utterance in a label, and highlight the word currently being spoken:

var utteranceLabel: UILabel!

// MARK: - AVSpeechSynthesizerDelegate
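To make the delegate callbacks concrete, here is a minimal sketch that logs each word as it is spoken rather than highlighting it in a label. SpeechLogger and spokenWord(in:range:) are hypothetical names introduced for this example; only the delegate methods themselves come from AVFoundation.

```swift
import AVFoundation

// Hypothetical helper: extracts the substring the synthesizer reports
// it is about to speak.
func spokenWord(in string: String, range: NSRange) -> String {
    (string as NSString).substring(with: range)
}

// Hypothetical delegate that prints speech events to the console.
final class SpeechLogger: NSObject, AVSpeechSynthesizerDelegate {
    func speechSynthesizer(_ synthesizer: AVSpeechSynthesizer,
                           didStart utterance: AVSpeechUtterance) {
        print("Started")
    }

    func speechSynthesizer(_ synthesizer: AVSpeechSynthesizer,
                           willSpeakRangeOfSpeechString characterRange: NSRange,
                           utterance: AVSpeechUtterance) {
        print("Speaking:", spokenWord(in: utterance.speechString, range: characterRange))
    }

    func speechSynthesizer(_ synthesizer: AVSpeechSynthesizer,
                           didFinish utterance: AVSpeechUtterance) {
        print("Finished")
    }
}

let synthesizer = AVSpeechSynthesizer()
let logger = SpeechLogger()

// The delegate is held weakly, so keep your own strong reference to it.
synthesizer.delegate = logger
synthesizer.speak(AVSpeechUtterance(string: "Hello, World!"))
```

In an app, the same range could drive an attributed string on a label instead of a print statement. Callbacks arrive asynchronously, so the synthesizer and delegate need to outlive the call to speak(_:).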

Check out this Playground for an example of live text-highlighting for all of the supported languages.

Anyone who travels to an unfamiliar place returns with a profound understanding of what it means to communicate. It’s totally different from how one is taught a language in high school: instead of genders and cases, it’s about emotions and patience and clinging onto every shred of understanding. One is astounded by the extent to which two humans can communicate with hand gestures and facial expressions. One is also humbled by how frustrating it can be when pantomiming breaks down.

In our modern age, we have the opportunity to go out in a world augmented by a collective computational infrastructure. Armed with AVSpeechSynthesizer and myriad other linguistic technologies on our devices, we’ve never been more capable of breaking down the forces that most divide our species.

If that isn’t universe-denting, then I don’t know what is.